Credit: https://iosband.github.io/2015/07/19/Efficient-experimentation-and-multi-armed-bandits.html

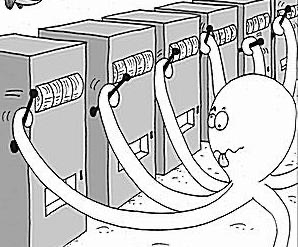

At first, multi-armed bandit means using

\(f^* : \mathcal{X} \rightarrow \mathbb{R}\)

- Each arm \(i \in \{1, \ldots, k\}\) pays out 1 dollar with probability \(p_i\) if it is played; otherwise it pays out nothing.

- While the \(p_1, \ldots, p_k\) are fixed, we don’t know any of their values.

- At each timestep \(t\), we pick a single arm \(a_t\) to play.

- Based on our choice, we receive a return of \(r_t \sim \mathrm{Ber}(p_{a_t})\), a Bernoulli draw that equals 1 with probability \(p_{a_t}\) and 0 otherwise.

- **How should we choose arms so as to maximize total expected return?**
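
The setup in the list above can be sketched in a few lines of Python. This is only an illustrative simulation, not code from the credited post: the class `BernoulliBandit`, the functions `play_uniform` and `play_epsilon_greedy`, the arm probabilities, and the seeds are all hypothetical names and values chosen for the example. Epsilon-greedy is used here simply as one common baseline for choosing arms; it is not claimed to be the answer to the question above.

```python
import random


class BernoulliBandit:
    """k-armed bandit: arm i pays 1 with probability p_i, otherwise 0."""

    def __init__(self, probs, seed=0):
        self.probs = list(probs)        # true p_i, hidden from the player
        self.rng = random.Random(seed)  # private RNG so runs are repeatable

    @property
    def k(self):
        return len(self.probs)

    def pull(self, arm):
        """Play one arm and return r_t ~ Ber(p_arm) as 0 or 1."""
        return 1 if self.rng.random() < self.probs[arm] else 0


def play_uniform(bandit, steps, seed=1):
    """Baseline: pick an arm uniformly at random at every timestep."""
    rng = random.Random(seed)
    return sum(bandit.pull(rng.randrange(bandit.k)) for _ in range(steps))


def play_epsilon_greedy(bandit, steps, epsilon=0.1, seed=1):
    """Mostly play the arm with the best observed payout rate,
    but explore a random arm with probability epsilon."""
    rng = random.Random(seed)
    pulls = [0] * bandit.k  # times each arm was played
    wins = [0] * bandit.k   # total payout observed per arm
    total = 0
    for _ in range(steps):
        untried = [i for i in range(bandit.k) if pulls[i] == 0]
        if untried:
            arm = untried[0]                 # try every arm once first
        elif rng.random() < epsilon:
            arm = rng.randrange(bandit.k)    # explore
        else:
            # exploit: highest empirical mean so far
            arm = max(range(bandit.k), key=lambda i: wins[i] / pulls[i])
        reward = bandit.pull(arm)
        pulls[arm] += 1
        wins[arm] += reward
        total += reward
    return total


if __name__ == "__main__":
    probs = [0.2, 0.5, 0.8]  # assumed values, unknown to the player
    steps = 5000
    print("uniform:       ", play_uniform(BernoulliBandit(probs), steps))
    print("epsilon-greedy:", play_epsilon_greedy(BernoulliBandit(probs), steps))
```

The player functions only ever see the 0/1 returns from `pull`, never `probs`, which mirrors the constraint that the \(p_i\) are fixed but unknown. Comparing the two totals gives a rough feel for how much an informed arm choice can gain over blind play.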