文章目录[隐藏]

This document will be updated in wiki

- CCD interconnect Metrics

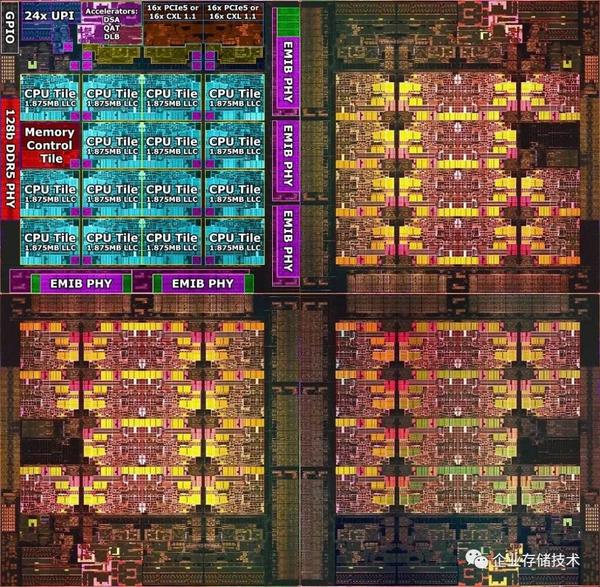

- Since the interconnect of the CCDs is EMIB, and the cache coherency is still directory routing from LLC to HBM and Memory, the PMU has operands DRd/CRd and abstraction of TOR and IA to record the metrics.

- All CHA Uncore PMU starts with UNC_CHA_TOR_INSERTS, those can be labeled as CHA tile 0x2000-0x2180 for a 23-core machine, which maps to each tile's 2/3/4/5 are control registers and 8/9/a/b are result registers. It's easy to get cha id mapping to os id through unc memory bandwidth write.

- Every local CCD mesh has 3 UPIs, 3 memory port and 1 PCIe &CXL port. There are three types of traffic IIO/PCIe&CXL/UPI. From 0x3000-0x30b0, Only 1 has value(because there's no PCIe device attached) on my machine for PCIe1-4 IIO 1-4, which has 4 control registers, which maps to each tile's 2/3/4/5 are control register and 8/9/a/b are result registers. From 0x3400-0x34b0, there are only 6 values one my machine, which I think should be UPI and iMC ports. All of those only have 2 control registers, 2/3 are control registers and 8/9 are result registers.

- CXL Traffic Metrics

- I think the current PMU is not fully renamed to the CXL.cache but embedded in the core's metrics, like hitm, miss snoop_none, etc.

- OCR.READS_TO_CORE.L3_MISS_LOCAL_SOCKET. This metric will count all the code read and RFO that miss the L3 but request to local CXL Type 2 or private DRAM. Remote requests will count toward the remote counterpart metric.

- Some guesses

- HBM2E serves as a non-inclusive directory-based directly mapped L4 cache(manifested as HBM M2M(mesh to memory) blocks and EDC/HBM channels) like persistent memory using the extra tag for caching. It can cache some of the CXL.cache requests if LLC each CCD is not big enough.

- AMD supports CXL2.0's RAS and gpf, while Intel gives it up because 1. gpf is useless, 2. it's the outer fabric manager's work, and intel puts the efforts into vendors.